
Modernizing art with Augmented Reality

This article explains how ARKit, Apple's augmented reality framework for iOS, was used to co-create the artwork "In Memory of Me", a collaboration between Fabernovel and French visual artist Stéphane Simon.

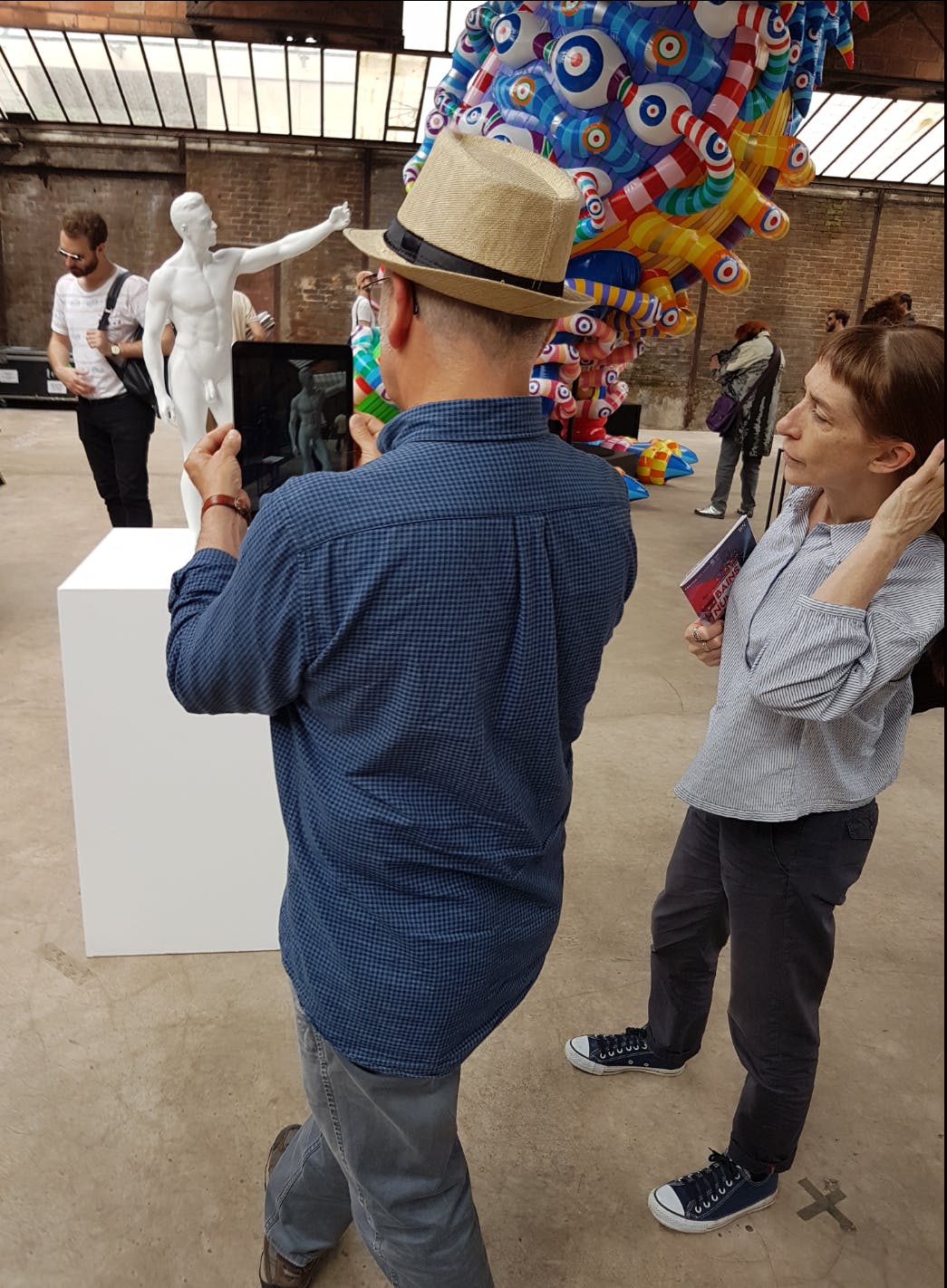

Fabernovel partnered with French visual artist Stéphane Simon on his project In Memory of Me, which consists of 3D-printed statues taking selfies. To him, the selfie is a fascinating everyday gesture that has become a ritual of personal expression.

The collaboration aimed to push the concepts of self-portraiture and observation further by displaying an animated narcissus tattoo which, through augmented reality, gradually spreads across the statue and surprises the viewer. The artwork was showcased at Les Bains Numériques from June 13th to June 17th. This biennial event has been held in Paris for the past twenty years, giving artists a place to explore combinations of art and the digital world.

The following sections explain how ARKit was used to achieve this result.

The goal: materializing the appearance of the tattoo

The challenge was mostly technical: how does one map a video texture onto a physical object? A "transparent" 3D model has to be placed exactly where the statue stands. However, when the project started, ARKit could not recognize 3D objects. At the time, image recognition appeared to be the best way to place and map the 3D model; this and the other techniques we used are explained below.

Relying on a transparent video to keep the actual statue visible

Can a video be applied directly to a 3D model?

The original video was in black and white. Had it been applied directly in its original form, this is what the model would have looked like.

The issue: the white areas of the video completely hide the actual statue. Unlike PNG images, common video files do not carry an alpha channel. Our strategy was therefore to use a second video as a transparency mask: the black parts of the mask reveal what should be displayed, while the white parts hide the rest. Luckily for us, since the tattoo was black and white, the same video file could serve as its own mask.
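A minimal sketch of the masking idea, assuming the mask's luminance maps linearly to opacity (black fully opaque, white fully transparent). `maskOpacity`, `composite` and `applyTattooVideo` are hypothetical helpers, not the project's code. The SceneKit part relies on `SCNTransparencyMode.rgbZero`, which derives transparency from the luminance of a material's `transparent` property (black being opaque). It also assumes that SceneKit accepts the same `AVPlayer` for both the `diffuse` and `transparent` properties, which we have not verified.

```swift
import Foundation

/// Opacity of the tattoo overlay for one pixel of the black-and-white video.
/// Black (luminance 0) is tattoo ink and stays fully opaque; white
/// (luminance 1) becomes fully transparent so the real statue shows through.
func maskOpacity(luminance: Double) -> Double {
    return 1.0 - min(max(luminance, 0.0), 1.0)
}

/// "Over" compositing of one video channel onto the camera image,
/// using the same video's luminance as the mask.
func composite(video: Double, camera: Double, luminance: Double) -> Double {
    let alpha = maskOpacity(luminance: luminance)
    return alpha * video + (1.0 - alpha) * camera
}

#if canImport(SceneKit) && canImport(AVFoundation)
import SceneKit
import AVFoundation

/// Hypothetical helper: plays the tattoo video on `node`, using the video as its own mask.
/// Assumption: one AVPlayer can feed both material properties.
@discardableResult
func applyTattooVideo(_ videoURL: URL, to node: SCNNode) -> AVPlayer {
    let player = AVPlayer(url: videoURL)
    let material = SCNMaterial()
    material.diffuse.contents = player       // the visible tattoo
    material.transparent.contents = player   // the same video as a luminance mask
    material.transparencyMode = .rgbZero     // black = opaque, white = transparent
    node.geometry?.materials = [material]
    player.play()
    return player
}
#endif
```

Because the tattoo is black ink on a white background, a white pixel yields zero opacity and the camera image (the real statue) shows through unchanged.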

(Shout-out to this GitHub project, which explains how to use this technique as well as chroma keying.)

Next challenge: how to place the statue without relying on 3D object tracking?

On June 4th, Apple announced ARKit 2, then in beta, which can detect 3D objects. However, since this feature was not available when the project started, we had to find another way to place the model correctly. Our trick: image recognition, which had been introduced in iOS 11.3. With two images placed on either side of the statue (left and right), the 3D model was finally placed correctly.
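The placement can be sketched as follows, under assumptions of our own: the statue stands halfway between the two markers, and its orientation follows the left-to-right line between them. `Point3`, `statueCenter`, `statueYaw`, `runImageDetection` and `position(of:)` are hypothetical names, and `"AR Resources"` is simply Xcode's default asset-catalog group name. `ARWorldTrackingConfiguration.detectionImages` and `ARReferenceImage.referenceImages(inGroupNamed:bundle:)` are ARKit APIs available from iOS 11.3.

```swift
import Foundation

struct Point3: Equatable {
    var x: Double, y: Double, z: Double
}

/// Assumption: the statue stands halfway between the left and right markers.
func statueCenter(left: Point3, right: Point3) -> Point3 {
    return Point3(x: (left.x + right.x) / 2,
                  y: (left.y + right.y) / 2,
                  z: (left.z + right.z) / 2)
}

/// Rotation around the vertical (y) axis that aligns the model's x axis with
/// the left-to-right marker direction. A rotation by angle θ around y maps
/// (1, 0, 0) to (cos θ, 0, -sin θ), hence atan2(-dz, dx).
func statueYaw(left: Point3, right: Point3) -> Double {
    return atan2(-(right.z - left.z), right.x - left.x)
}

#if canImport(ARKit) && os(iOS)
import ARKit

/// Runs world tracking with image detection; the group name is an assumption.
func runImageDetection(on session: ARSession) {
    let configuration = ARWorldTrackingConfiguration()
    configuration.detectionImages =
        ARReferenceImage.referenceImages(inGroupNamed: "AR Resources", bundle: nil) ?? []
    session.run(configuration)
}

/// World position of an anchor, e.g. the ARImageAnchor ARKit adds for each marker.
func position(of anchor: ARAnchor) -> Point3 {
    let t = anchor.transform.columns.3
    return Point3(x: Double(t.x), y: Double(t.y), z: Double(t.z))
}
#endif
```

In `renderer(_:didAdd:for:)`, each `ARImageAnchor` can be told apart through `referenceImage.name`; once both markers are known, the statue node's position and `eulerAngles.y` can be set from `statueCenter` and `statueYaw`.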

QR codes are another way to achieve a similar result: by combining plane detection with the Vision framework, QR codes can be identified and positioned in space. Image recognition is more convenient, since it does not require detecting planes in the environment and lets us use more attractive images to place the statue. The more complex the image, the better image recognition works; Xcode, Apple's IDE for iOS development, even issues a warning when an image is not complex enough.
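A rough sketch of the QR-code alternative, assuming the camera image fills the view with the same orientation and aspect ratio (a real app would convert coordinates with `ARFrame.displayTransform(for:viewportSize:)` instead). `viewPoint` and `firstBarcodeBox` are hypothetical helpers. `VNDetectBarcodesRequest` and `VNImageRequestHandler` are Vision APIs; Vision reports bounding boxes in normalized coordinates with the origin at the bottom-left, whereas UIKit views put it at the top-left.

```swift
import Foundation

/// Converts the center of a normalized Vision bounding box into view points.
/// Assumption: image and view share orientation and aspect ratio.
func viewPoint(boxX: Double, boxY: Double, boxWidth: Double, boxHeight: Double,
               viewWidth: Double, viewHeight: Double) -> (x: Double, y: Double) {
    let centerX = boxX + boxWidth / 2
    let centerY = boxY + boxHeight / 2
    // Flip the y axis: Vision's origin is bottom-left, the view's is top-left.
    return (centerX * viewWidth, (1 - centerY) * viewHeight)
}

#if canImport(Vision)
import Vision
import CoreVideo

/// Synchronously detects barcodes (QR codes included) in a camera frame,
/// e.g. ARFrame.capturedImage, and returns the first normalized bounding box.
func firstBarcodeBox(in pixelBuffer: CVPixelBuffer) -> CGRect? {
    let request = VNDetectBarcodesRequest()
    let handler = VNImageRequestHandler(cvPixelBuffer: pixelBuffer, options: [:])
    do {
        try handler.perform([request])
    } catch {
        return nil
    }
    return (request.results as? [VNBarcodeObservation])?.first?.boundingBox
}
#endif
```

The resulting point could then be hit-tested against detected planes, for example with `ARSCNView.hitTest(_:types:)` and `.existingPlaneUsingExtent`, to obtain the QR code's 3D position.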

You shall not see my back: statues are not see-through

With the configuration described so far, the tattoo on the statue's back could be seen when facing the statue, while it should have been hidden by the statue's actual body!

Our hack: a technique called occlusion, which hides textures located behind other geometry. Occlusion is not a new process, but using it with real-life objects is still fairly rare, as AR is a recent technology. It consists of using one model to conceal the others: an invisible 3D model is placed in front of the areas we want to hide, and this model masks the textures of the other 3D models behind it, as seen below.

A naive approach is to place an occluding copy of the statue exactly where the video model is. This does not work properly, because the surfaces of the video model and of the occluding model coincide and conflict during rendering (a problem known as z-fighting).

Our hack, step 2: we therefore placed the occluding model slightly behind the video model, offsetting it along the direction between the viewer's device and the video node.
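A toy model of the depth buffer illustrates why the offset matters. It assumes a strict less-than depth test and made-up distances (statue front at 1.0 m, statue back at 1.3 m from the camera); on real hardware, rounding makes coincident surfaces flicker rather than fail consistently. `isVisible` and `occluderDepth` are hypothetical helpers, not SceneKit API.

```swift
/// Assumption: a fragment is drawn only if it is strictly closer to the camera
/// than the depth already stored in the buffer.
func isVisible(fragmentDepth: Double, depthBuffer: Double) -> Bool {
    return fragmentDepth < depthBuffer
}

/// Depth stored after the invisible occluder is drawn first: its front surface
/// matches the statue's front, pushed back by `offset` (in meters).
func occluderDepth(statueFront: Double, offset: Double) -> Double {
    return statueFront + offset
}

let statueFront = 1.0  // front tattoo surface, hypothetical distance
let statueBack = 1.3   // back tattoo surface, hypothetical distance

// No offset: the front tattoo ties with the occluder and loses the depth test.
let frontWithoutOffset = isVisible(fragmentDepth: statueFront,
                                   depthBuffer: occluderDepth(statueFront: statueFront, offset: 0))
// 0.5 mm offset: the front tattoo wins, the back tattoo stays hidden.
let frontWithOffset = isVisible(fragmentDepth: statueFront,
                                depthBuffer: occluderDepth(statueFront: statueFront, offset: 0.0005))
let backWithOffset = isVisible(fragmentDepth: statueBack,
                               depthBuffer: occluderDepth(statueFront: statueFront, offset: 0.0005))
```

The offset only needs to exceed the depth precision at the viewing distance; anything much larger would let parts of the back tattoo leak through near the statue's silhouette.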

Creating the occluding statue:

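```swift
// Inside a setup method of the view controller (requires `import ARKit` and
// `import simd`). `statueNode` holds the transparent video model and
// `occlusionNode` is an optional SCNNode property of the view controller.
guard let statueNode = statueNode else { return }

// Clone the statue and copy its geometry, so that replacing the clone's
// materials does not alter the original statue's materials.
let occlusionClone = statueNode.clone()
occlusionClone.geometry = statueNode.geometry?.copy() as? SCNGeometry

// A material that writes nothing to the color buffer: the clone is invisible,
// but it still writes to the depth buffer and hides what lies behind it.
let occlusionMaterial = SCNMaterial()
occlusionMaterial.colorBufferWriteMask = []
occlusionClone.geometry?.materials = [occlusionMaterial]

sceneView.scene.rootNode.addChildNode(occlusionClone)
occlusionNode = occlusionClone

// This ensures the occluder is rendered first (lower renderingOrder values
// are rendered earlier) in order to hide what is behind it
occlusionClone.renderingOrder = -1
statueNode.renderingOrder = 10
```

Placing it correctly: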

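```swift
// SCNSceneRendererDelegate callback (inherited by ARSCNViewDelegate), called every frame.
func renderer(_ renderer: SCNSceneRenderer, updateAtTime time: TimeInterval) {
    guard let camera = sceneView.pointOfView,
          let statueNode = statueNode,
          let occlusionNode = occlusionNode else { return }

    // Unit vector from the statue towards the viewer's device, scaled down to a
    // 0.5 mm offset (ARKit units are meters). Vector math uses simd here; an
    // SCNVector3 extension such as https://github.com/apexskier/SKLinearAlgebra
    // can provide normalized() and vector operators instead.
    let statuePosition = statueNode.simdWorldPosition
    let direction = simd_normalize(camera.simdWorldPosition - statuePosition) * 0.0005

    // Move the occluder away from the camera on the horizontal plane, so that
    // it sits slightly behind the video model's visible surface.
    occlusionNode.simdWorldPosition = simd_float3(
        statuePosition.x - direction.x,
        statuePosition.y,
        statuePosition.z - direction.z
    )
}
```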

Keeping the world tracked: the product is done, but how do we maintain an uninterrupted experience?

Once the animation was finished, we did not want to rely on the images again to place the statue. If the application is left running, there are no issues as long as the iPad is not set down with its camera against a surface. ARKit relies on the device's accelerometer, gyroscope and camera to locate the device in the world it observes; if the camera cannot see anything, the ARSession eventually fails. We therefore recommend using a stand when the iPad is resting, to prevent any interruption of the ARSession.
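A sketch of how the app could warn staff when tracking degrades, for instance when the camera is covered. It assumes a hypothetical `TrackingStatus` enum that mirrors `ARCamera.TrackingState`, so the decision logic can be tested without a device; `trackingHint` and `TrackingMonitor` are hypothetical as well, and the hint texts are placeholders. `session(_:cameraDidChangeTrackingState:)` and `sessionShouldAttemptRelocalization(_:)` are `ARSessionObserver` methods, the latter available from iOS 11.3.

```swift
/// Hypothetical mirror of ARCamera.TrackingState.
enum TrackingStatus {
    case normal
    case limited(reason: String)
    case notAvailable
}

/// Message to display, or nil when tracking is fine.
func trackingHint(for status: TrackingStatus) -> String? {
    switch status {
    case .normal:
        return nil
    case .limited:
        return "Put the iPad back on its stand with the camera facing the statue."
    case .notAvailable:
        return "Tracking unavailable: restart the experience."
    }
}

#if canImport(ARKit) && os(iOS)
import ARKit

extension TrackingStatus {
    init(_ state: ARCamera.TrackingState) {
        switch state {
        case .normal: self = .normal
        case .notAvailable: self = .notAvailable
        case .limited(let reason): self = .limited(reason: String(describing: reason))
        }
    }
}

/// Hypothetical observer; assign it to `sceneView.session.delegate`.
final class TrackingMonitor: NSObject, ARSessionDelegate {
    var onHint: ((String?) -> Void)?

    func session(_ session: ARSession, cameraDidChangeTrackingState camera: ARCamera) {
        onHint?(trackingHint(for: TrackingStatus(camera.trackingState)))
    }

    // Asks ARKit to relocalize against the existing map after an interruption
    // instead of restarting tracking from scratch.
    func sessionShouldAttemptRelocalization(_ session: ARSession) -> Bool {
        return true
    }
}
#endif
```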

ARKit 2 also solves this issue: it can persist AR experiences, so an experience can be relaunched by recognizing an environment it has already encountered.
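A sketch of ARKit 2's world-map persistence (iOS 12): save the current `ARWorldMap` once enough of the room has been mapped, then relaunch a session from it. `MappingStatus`, `isWorthSaving`, `saveWorldMap` and `restoreWorldMap` are hypothetical names, and treating `.extending` and `.mapped` as good enough to save is our assumption. `getCurrentWorldMap(completionHandler:)`, `ARWorldTrackingConfiguration.initialWorldMap` and `ARFrame.worldMappingStatus` are ARKit 2 APIs.

```swift
import Foundation

/// Hypothetical mirror of ARFrame.WorldMappingStatus.
enum MappingStatus {
    case notAvailable, limited, extending, mapped
}

/// Assumption: a map is worth saving once ARKit reports .extending or .mapped.
func isWorthSaving(_ status: MappingStatus) -> Bool {
    return status == .extending || status == .mapped
}

#if canImport(ARKit) && os(iOS)
import ARKit

@available(iOS 12.0, *)
extension MappingStatus {
    init(_ status: ARFrame.WorldMappingStatus) {
        switch status {
        case .notAvailable: self = .notAvailable
        case .limited: self = .limited
        case .extending: self = .extending
        case .mapped: self = .mapped
        @unknown default: self = .notAvailable
        }
    }
}

/// Archives the current world map to `url` (errors are ignored in this sketch).
@available(iOS 12.0, *)
func saveWorldMap(from session: ARSession, to url: URL) {
    session.getCurrentWorldMap { worldMap, _ in
        guard let map = worldMap,
              let data = try? NSKeyedArchiver.archivedData(withRootObject: map,
                                                           requiringSecureCoding: true)
        else { return }
        try? data.write(to: url, options: .atomic)
    }
}

/// Relaunches tracking from a saved map; returns false if none could be loaded.
@available(iOS 12.0, *)
func restoreWorldMap(into session: ARSession, from url: URL) -> Bool {
    guard let data = try? Data(contentsOf: url),
          let map = try? NSKeyedUnarchiver.unarchivedObject(ofClass: ARWorldMap.self, from: data)
    else { return false }
    let configuration = ARWorldTrackingConfiguration()
    configuration.initialWorldMap = map  // ARKit relocalizes against this map
    session.run(configuration, options: [.resetTracking, .removeExistingAnchors])
    return true
}
#endif
```

Anchors saved in the map (for example one marking the statue) come back once ARKit relocalizes, which would make the two-image setup a one-time step.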

Conclusion

The final result is satisfying. However, because ARKit 1 cannot recognize 3D objects, the model is sometimes misplaced when the viewer moves around the statue. A future version of the app will detect the statue's 3D model directly, removing the tedious two-image setup and fixing the model placement.

People who tried the app were pleasantly surprised. It was exciting to see them move around the statue on their own initiative to view the tattoo from every angle, showing that AR is a medium that lets us explore our senses in unexpected ways.